Use Omni's cubic regression calculator whenever you want to fit the cubic model of regression to a dataset. With its help, you'll be able to quickly determine the cubic polynomial that best models your data. If you need to learn more about this technique, scroll down to find an article where we give the cubic regression formula, explain how to calculate cubic regression by hand, and illustrate all this theory with an example of cubic regression!

Definition of cubic regression

In general, regression is a statistical technique that allows us to model the relationship between two variables by finding a curve that best fits the observed samples. If this curve is a polynomial, we are dealing with polynomial regression, which you can discover in the polynomial regression calculator.

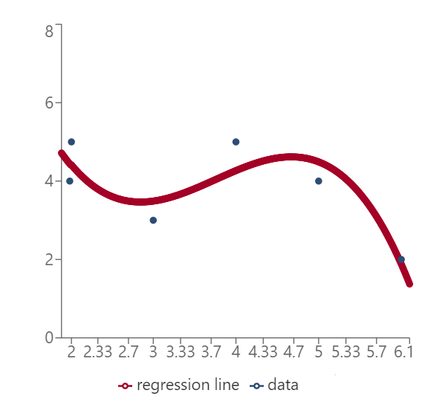

In the cubic regression model, we deal with cubic functions, that is, polynomials of degree 3. You can see an example in the picture above. The idea is the same as in other regression models, like linear regression, where we try to fit a straight line to data points, or quadratic regression, where we deal with parabolas. You can discover more about them in the dedicated Omni tools, namely the linear regression calculator and the quadratic regression calculator.

Now that we understand the cubic polynomial regression model, let's discuss the cubic regression formula.

The formula for cubic regression

To discuss the cubic regression formula in a more formal way, we need to introduce some notation. Let us, therefore, consider a set of data points:

(x1,y1), ..., (xn,yn).

The cubic regression function takes the form:

y = a + bx + cx² + dx³,

where a, b, c, d are real numbers, called coefficients of the cubic regression model. As you can see, we model how the change in x affects the value of y. In other words, we assume here that x is the independent (explanatory) variable and y is the dependent (response) variable.

- If d = 0, we obtain quadratic regression; and

- If c = d = 0, then we get a simple linear regression model.

And that's it when it comes to the cubic regression equation! The main challenge now is to determine the actual values of the four coefficients. To find them, we usually resort to the least-squares method. That is, we look for the values of a, b, c, d that minimize the sum of the squared distances between each data point:

(xi, yi),

and the corresponding point predicted by the cubic regression equation:

(xi, a + bxi + cxi² + dxi³).
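To make the least-squares criterion concrete, here is a minimal Python sketch of the quantity being minimized. The function names `cubic` and `sum_of_squared_errors` and the toy data are assumptions made up for illustration; they do not come from the calculator itself.

```python
def cubic(x, a, b, c, d):
    """Evaluate the cubic model y = a + b*x + c*x^2 + d*x^3."""
    return a + b * x + c * x**2 + d * x**3


def sum_of_squared_errors(coeffs, points):
    """Sum of squared differences between observed y and the curve."""
    a, b, c, d = coeffs
    return sum((y - cubic(x, a, b, c, d)) ** 2 for x, y in points)


# Toy data lying exactly on y = x^3 (made up for illustration).
points = [(-1, -1), (0, 0), (1, 1), (2, 8)]

print(sum_of_squared_errors((0, 0, 0, 1), points))  # 0: the curve y = x^3 hits every point
print(sum_of_squared_errors((0, 1, 0, 0), points))  # 36: the line y = x misses (2, 8) by 6
```

Least-squares fitting amounts to searching for the coefficient tuple that makes this number as small as possible.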

"OK, but this doesn't help that much in finding these values", you're probably thinking, and we completely agree. In what follows, we discuss how to determine the coefficients in cubic regression function by hand. A quicker solution is, of course, to use Omni's cubic regression calculator 😉.

How to use this cubic regression calculator?

Here's a short instruction on how to use our cubic regression calculator:

- Input your sample — up to 30 points. Remember that the calculator needs at least 4 points to fit the cubic regression function to your data!

- The calculator will display the scatter plot of your data and the cubic curve fitted to these points.

- Below the scatter plot, you will find the cubic regression equation for your data.

- If you need the coefficients computed with a higher precision, use the Precision field to change the number of significant figures.

How to find cubic regression by hand

It's high time we discussed how to compute the coefficients of cubic regression by hand. We'll use the projection approach, which is a very quick method as it uses matrix operations.

Let us introduce some necessary notation:

- We let X be a matrix with four columns and n rows, where n is the number of data points. We fill the first column with ones, the second with the observed values x1, ..., xn of the explanatory (independent) variable, the third with squares of these observed values, and the fourth with cubes of these observed values:

$$
X = \begin{bmatrix}
1 & x_1 & x_1^2 & x_1^3 \\
1 & x_2 & x_2^2 & x_2^3 \\
\vdots & \vdots & \vdots & \vdots \\
1 & x_n & x_n^2 & x_n^3
\end{bmatrix}
$$

This matrix is often called the model matrix.

- We let y be a column vector containing the values y1, ..., yn of the response (dependent) variable:

$$
y = \begin{bmatrix} y_1 \\ y_2 \\ \vdots \\ y_n \end{bmatrix}
$$

- We let β be the column of the coefficients of the cubic regression model that we're looking for:

$$
\beta = \begin{bmatrix} a \\ b \\ c \\ d \end{bmatrix}
$$

Keep in mind that the order matters: start with a at the top and finish with d at the bottom!
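The model matrix described above can be built in a couple of lines. The following is only a sketch in plain Python; the helper name `build_model_matrix` and the sample x values are hypothetical.

```python
def build_model_matrix(xs):
    """Return the n-by-4 model matrix with rows [1, x, x^2, x^3]."""
    return [[1, x, x**2, x**3] for x in xs]


# Made-up x values, one row of the matrix per data point.
for row in build_model_matrix([1, 2, 3]):
    print(row)
```

Each row corresponds to one data point, and the column order (ones, x, x², x³) matches the order a, b, c, d in β.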

Now, to determine the actual values of the coefficients, we just use the so-called normal equation:

β = (XᵀX)⁻¹Xᵀy

where:

- Xᵀ is the transpose of X;

- (XᵀX)⁻¹ is the inverse of XᵀX; and

- The operation between every two matrices is matrix multiplication.

⚠️ Keep in mind that for some very peculiar datasets, the inverse of XᵀX might not exist. This happens when the data contains fewer than four distinct x values. If this happens, you cannot fit the cubic polynomial regression to this data.
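As a rough illustration of the normal equation, the sketch below uses only Python's standard library. It is one possible implementation under stated assumptions, not the calculator's actual code: the name `fit_cubic` is hypothetical, and instead of forming the inverse explicitly it solves the equivalent system (XᵀX)β = Xᵀy by Gauss-Jordan elimination with exact fractions, which yields the same β.

```python
from fractions import Fraction


def fit_cubic(points):
    """Return [a, b, c, d] of the least-squares cubic for (x, y) pairs."""
    # Model matrix rows [1, x, x^2, x^3] and response vector, as exact fractions.
    X = [[Fraction(x) ** k for k in range(4)] for x, _ in points]
    y = [Fraction(value) for _, value in points]

    # Augmented 4x5 system [X^T X | X^T y].
    aug = []
    for i in range(4):
        row = [sum(r[i] * r[j] for r in X) for j in range(4)]
        row.append(sum(r[i] * yi for r, yi in zip(X, y)))
        aug.append(row)

    for col in range(4):
        # Pick a row with a nonzero entry in this column as the pivot.
        pivot = next((r for r in range(col, 4) if aug[r][col] != 0), None)
        if pivot is None:
            raise ValueError("X^T X is not invertible for this dataset")
        aug[col], aug[pivot] = aug[pivot], aug[col]
        # Scale the pivot row to 1, then clear the column in all other rows.
        pivot_value = aug[col][col]
        aug[col] = [v / pivot_value for v in aug[col]]
        for r in range(4):
            if r != col:
                factor = aug[r][col]
                aug[r] = [v - factor * p for v, p in zip(aug[r], aug[col])]

    # The last column now holds beta = (a, b, c, d).
    return [float(row[4]) for row in aug]


# Made-up points lying on y = 1 + 2x - x^3.
print(fit_cubic([(0, 1), (1, 2), (2, -3), (3, -20)]))
```

Exact fractions keep the singularity check reliable: a dataset with fewer than four distinct x values raises `ValueError` instead of returning coefficients distorted by rounding.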

As you can see, it's not that hard to find cubic regression by hand, yet there are some challenges along the way. To get a better grasp of how to do all these computations in practice, let's solve an example of cubic regression together.

Cubic regression example

Let us find the cubic regression function for the following dataset:

(0, 1), (2, 0), (3, 3), (4, 5), (5, 4)

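Here are our matrices:

- The matrix X:

$$
X = \begin{bmatrix}
1 & 0 & 0 & 0 \\
1 & 2 & 4 & 8 \\
1 & 3 & 9 & 27 \\
1 & 4 & 16 & 64 \\
1 & 5 & 25 & 125
\end{bmatrix}
$$

- The vector y:

$$
y = \begin{bmatrix} 1 \\ 0 \\ 3 \\ 5 \\ 4 \end{bmatrix}
$$

We apply the formula step-by-step:

- First, we determine Xᵀ:

$$
X^T = \begin{bmatrix}
1 & 1 & 1 & 1 & 1 \\
0 & 2 & 3 & 4 & 5 \\
0 & 4 & 9 & 16 & 25 \\
0 & 8 & 27 & 64 & 125
\end{bmatrix}
$$

- Next, we compute XᵀX:

$$
X^T X = \begin{bmatrix}
5 & 14 & 54 & 224 \\
14 & 54 & 224 & 978 \\
54 & 224 & 978 & 4424 \\
224 & 978 & 4424 & 20514
\end{bmatrix}
$$

- Then, we find the inverse (XᵀX)⁻¹.

- Finally, we perform the matrix multiplication (XᵀX)⁻¹Xᵀy. The cubic regression coefficients we wanted to find are:

$$
\beta \approx \begin{bmatrix} 0.9973 \\ -5.0755 \\ 3.0687 \\ -0.3868 \end{bmatrix}
$$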

Therefore, the cubic regression function that best fits our data is:

y = 0.9973 - 5.0755x + 3.0687x² - 0.3868x³
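As a quick sanity check of this result, the short Python sketch below plugs each x from the dataset into the fitted equation and compares the prediction with the observed y. The coefficients are the rounded values from the example, so small discrepancies are expected; the helper name `predict` is made up for illustration.

```python
points = [(0, 1), (2, 0), (3, 3), (4, 5), (5, 4)]

# Rounded coefficients taken from the worked example.
a, b, c, d = 0.9973, -5.0755, 3.0687, -0.3868


def predict(x):
    """Evaluate the fitted cubic at x."""
    return a + b * x + c * x**2 + d * x**3


residuals = [y - predict(x) for x, y in points]
for (x, y), r in zip(points, residuals):
    print(f"x = {x}: observed {y}, predicted {predict(x):.4f}, residual {r:+.4f}")
```

With five points and only four coefficients, the curve cannot pass through every point exactly, so the residuals are small but nonzero.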

As you can see, to find the cubic regression formula by hand, we need to perform a lot of calculations. Thankfully, there's Omni's cubic regression calculator 😊!

FAQs

What is cubic regression?

Cubic regression is a statistical technique that finds the cubic polynomial (a polynomial of degree 3) that best fits our dataset. This is a special case of polynomial regression; other examples include simple linear regression and quadratic regression.

How do I find cubic regression?

To calculate cubic regression, we use the method of least squares. In practice, we apply the normal equation, which combines the model matrix X, built from the independent variable, and the vector y, which contains the values of the dependent variable. Through a series of matrix operations, this equation allows us to find the coefficients of cubic regression.

When do I use cubic regression?

Use cubic regression when you see in the scatter plot (or you have some prior theory that leads you to believe) that your data follows a cubic curve. Remember, though, that we want our models to be as simple as possible, so, whenever possible, try to fit a simpler model, like simple linear or quadratic regression.

Can I fit cubic regression to 3 data points?

You can fit many (infinitely many, in fact) cubic curves to 3 data points. You need 4 data points to find a unique cubic model. Note that with 4 points the fit will be perfect, i.e., all the points will lie on the curve!