Gemini Scores Ahead in New ORCA Benchmark, Outpacing ChatGPT Accuracy

Report Highlights

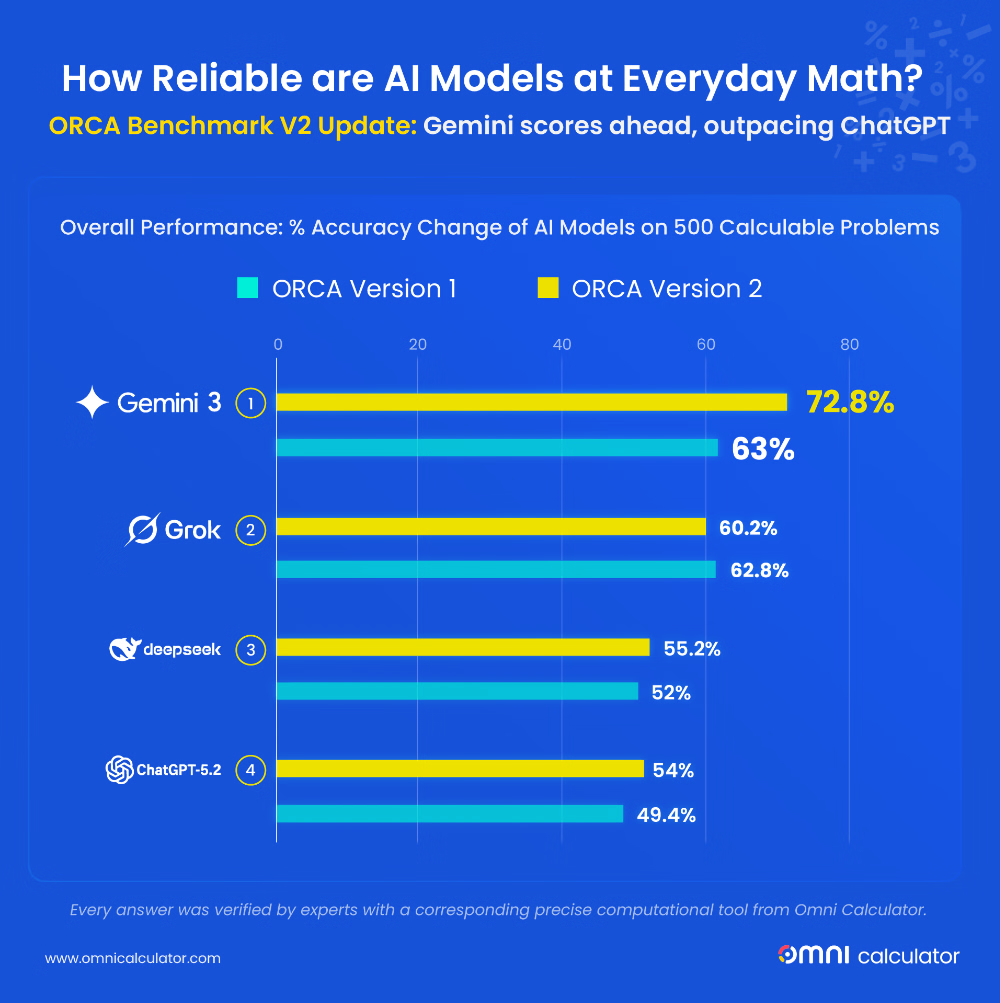

In the second run of the ORCA (Omni Research on Calculation in AI) benchmark, a large-scale test of today’s leading chatbots on real-world quantitative problems, Gemini 3 Flash made a big leap, now solving nearly three-quarters of questions correctly, a milestone no system reached in the first study. Yet this progress comes with a twist: answers can still shift unpredictably. The same question can produce different responses, sometimes subtly, sometimes dramatically. Accuracy is improving, but consistency cannot be guaranteed.

It is a striking contrast. A calculator is predictable. Ask it the same question today or next year, and the answer will never change. AI doesn’t work that way.

We put four leading AIs to the test and created the second iteration of the ORCA Benchmark. Most benchmarks use complex, academic math problems; we built our test around 500 practical questions that everyday people might actually ask.

The chosen models:

- ChatGPT-5.2 (OpenAI);

- Gemini 3 Flash (Google);

- Grok-4.1 (xAI); and

- DeepSeek V3.2 (DeepSeek AI).

If you read the first edition of our ORCA Benchmark, published a couple of months ago, you will notice that it included a model from Anthropic that isn’t listed above. That is because we only test new models that users can access for free (even if only for a couple of messages per day, like ChatGPT-5.2). Anthropic has released a new Opus model, but it is not free to use. And since its basic, free-to-use model, Claude 4.5 Sonnet, hasn’t been updated since we posted our original benchmark, there was no need to retest it.

You might ask why, in that case, we retested DeepSeek V3.2, which was already V3.2 in the first ORCA Benchmark. This one is tricky: although the model name is unchanged, the model itself has been tweaked. The version we tested a couple of months ago was considered an alpha test version, while this one is the official, stable release, and the results show that it has improved by more than 3 percentage points since the original test.

We did not use paid-tier or private prototypes. This approach provides a genuine assessment of the mathematical capabilities you can expect from these AI chatbots right now.

Our retest shows a noticeable shift in the AI leaderboard. Gemini 3 Flash made the biggest jump, now correctly answering nearly three-quarters of questions. This result puts it clearly ahead of the other models. ChatGPT and DeepSeek made smaller, steady improvements, gaining just a few percentage points. Grok, on the other hand, slipped slightly. In other words, one model is pulling forward, two are moving at a moderate pace, and one has fallen behind.

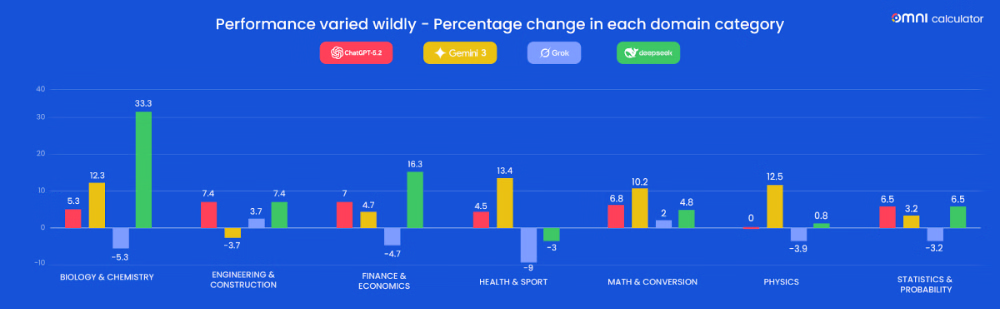

Looking closer at each subject reveals a more detailed story. Gemini 3 Flash made double-digit gains in several areas. It went from 51% to 63% in biology and chemistry, from 46% to 60% in health and sport, from 43% to 56% in physics, and from 83% to 93% in math and conversions. Its only drop was in engineering and construction, falling from 74% to 70%. These results show that progress is strong in many areas, but not uniform across every field.

DeepSeek V3.2 recorded the largest improvement in a single domain, jumping from 11% to 44% in biology and chemistry. It also improved in finance and economics and in engineering and construction, while slipping slightly in health and sport. ChatGPT improved moderately across all subjects, showing steady gains that suggest consistency rather than dramatic leaps.

Grok 4.1 declined in most areas, including biology and chemistry, health and sport, physics, and statistics and probability. A few subjects, such as engineering and construction, and math and conversions, saw minor improvements, but overall, the model seems to be moving backwards.

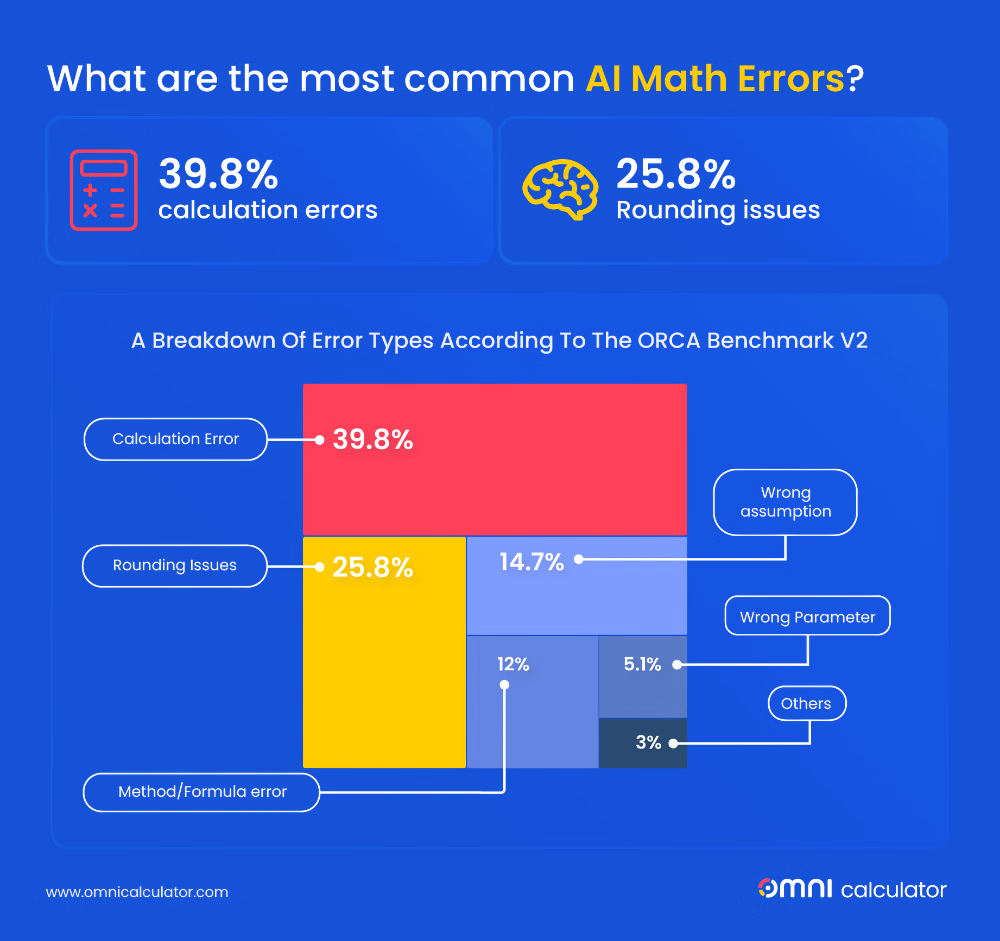

Examining the types of mistakes AIs make reveals how performance is evolving. Across all models, calculation errors are more common now, rising from 33% of all mistakes to 40%. At the same time, precision and rounding errors are less frequent, falling from 35% to 26%. This shows that AIs are getting better at presenting numbers clearly, but the harder challenge of correct computation remains.

Other error types show smaller changes. Wrong assumptions increased slightly, suggesting that models sometimes jump to conclusions. Method and formula errors dropped a little, reflecting modest gains in reasoning. Hallucinations, refusals, and unit mistakes are still extremely rare.

Looking at each model individually:

- ChatGPT reduced method/formula errors by 20% and cut precision mistakes to roughly a third, although calculation errors ticked up by 12%.

- Gemini 3 Flash made the largest improvements, with calculation errors dropping by 15% and rounding mistakes by 28%.

- Grok and DeepSeek also improved in rounding and precision, although calculation errors remain a challenge for all models.

The core issue is that LLMs don’t actually “do” math; they predict the next likely word or number in a sequence. That remains true even when function calling is turned on and the LLM writes code to solve the problem.

This architecture leads to a type of fatigue where, during multi-step calculations, a model will prematurely round an intermediate number just to make the sentence flow better. These tiny shortcuts snowball, turning a precise engineering or physics calculation into a rough, and ultimately wrong, estimate. While no model has truly cracked this problem, Gemini 3 Flash settles for “good enough” less often than the others, which is why it leads this benchmark.

The data suggests that AI is becoming cleaner and more polished in how it presents results, but users still cannot rely on any model for entirely error-free calculations. Fixing formatting is easier than fixing core arithmetic, and the numbers reflect that gap clearly.

LLMs often act like a friend who tries to split a dinner bill in their head and rounds every single number to the nearest ten dollars. This habit of oversimplifying intermediate steps is why they fail at high-precision calculations. While Gemini 3 Flash isn’t perfect with high-precision math, it proved much better than its peers in our testing. For example, it was the only one to give the correct answer for these prompts:

- “What’s my VO2 max if I run 10.564 km in 45:28 minutes?”

  - Gemini pinpointed the correct 47.86 mL/kg/min, while DeepSeek missed the mark with a flat 50.

- “What sample size do I need to compute the 99% confidence interval for the proportion when the sample proportion is 0.78, and I need a precision of 0.5%?”

  - Gemini nailed the required 45,542, whereas Grok estimated 45,548.

- “What is the distance (in miles) travelled by an object moving with an initial speed of 400 mi/s and an acceleration of 21.3 g after 2 hours? Give me the answer with seven significant figures.”

  - Gemini maintained seven significant figures to reach 6,244,236 mi, leaving ChatGPT over 2,000 miles behind.
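For readers who want to check the three reference answers themselves, here is a minimal Python sketch. The report does not state which formulas its reference solutions use, so everything below is an assumption: the Daniels-Gilbert race formula for VO2 max, the standard normal-approximation formula for the sample size of a proportion, and constant-acceleration kinematics with standard gravity (9.80665 m/s²) and the international mile (1,609.344 m). The function names are ours. Under those assumptions, the calculations reproduce the figures quoted above.

```python
from math import ceil, exp
from statistics import NormalDist


def vo2_max_daniels(distance_m: float, time_min: float) -> float:
    """VO2 max estimate from a race result (Daniels-Gilbert formula, assumed)."""
    v = distance_m / time_min  # average speed in metres per minute
    vo2 = -4.60 + 0.182258 * v + 0.000104 * v**2
    pct_max = (0.8
               + 0.1894393 * exp(-0.012778 * time_min)
               + 0.2989558 * exp(-0.1932605 * time_min))
    return vo2 / pct_max


def sample_size(p: float, margin: float, z: float) -> int:
    """Sample size for a proportion: n = z^2 * p * (1 - p) / margin^2, rounded up."""
    return ceil(z**2 * p * (1 - p) / margin**2)


def distance_miles(v0_mi_s: float, accel_g: float, t_s: float) -> float:
    """Distance under constant acceleration: s = v0*t + a*t^2/2, in miles."""
    g = 9.80665            # standard gravity, m/s^2 (assumed)
    m_per_mile = 1609.344  # international mile (assumed)
    a_mi_s2 = accel_g * g / m_per_mile
    return v0_mi_s * t_s + 0.5 * a_mi_s2 * t_s**2


vo2 = vo2_max_daniels(10_564, 45 + 28 / 60)

z_exact = NormalDist().inv_cdf(0.995)         # two-sided 99% critical value
n_exact = sample_size(0.78, 0.005, z_exact)   # full-precision z
n_rounded = sample_size(0.78, 0.005, 2.576)   # z rounded to three decimals

dist = distance_miles(400, 21.3, 2 * 3600)

print(f"VO2 max:      {vo2:.2f} mL/kg/min")
print(f"Sample size:  {n_exact} (exact z) vs {n_rounded} (z = 2.576)")
print(f"Distance:     {dist:.7g} mi")
```

The sample-size case is the most telling. With the full-precision critical value the result is 45,542, while rounding z to the textbook 2.576 gives 45,548, the figure Grok reported. That is consistent with premature rounding of an intermediate value, although we cannot confirm that this is what the model did.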

These patterns confirm a central lesson from the ORCA Benchmark: AI progress is uneven. Understanding these patterns helps anyone interpret what these systems can reliably do in real-world tasks.

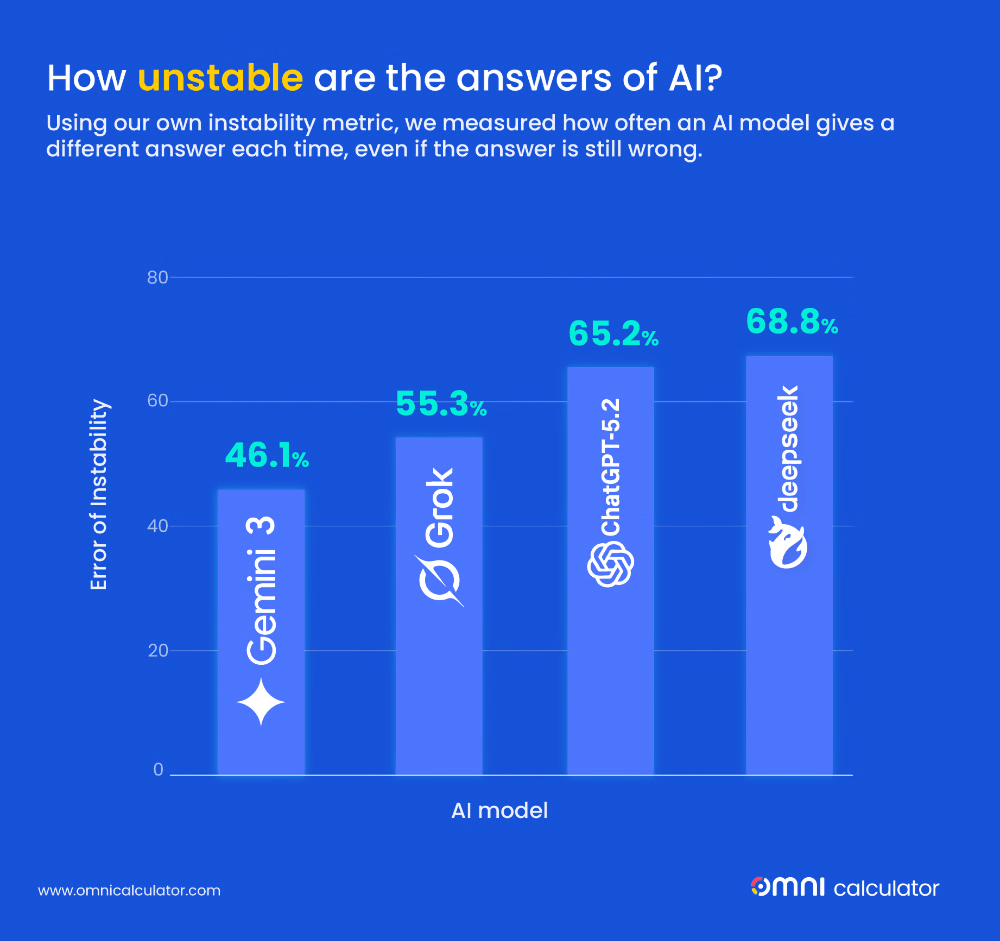

One crucial measure of AI performance is not just whether an answer is right or wrong, but how stable those answers are. We call this the instability metric. It looks at how often a model changes its output from one attempt to the next, even when the answer remains incorrect.

This behavior can happen in three ways:

- A wrong answer changes to a different wrong answer;

- A correct answer becomes wrong; and

- A wrong answer becomes correct.

The first two cases highlight why AI cannot yet replace a traditional calculator. You might get an answer that looks plausible, but it could change if you ask the question again.

For example, ChatGPT changed its answer in 65% of persistently incorrect cases, even though the new answer was still wrong. Gemini was the most stable at 46%, Grok changed its answer in 55% of cases, and DeepSeek was the least stable at 69%.
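To make the metric concrete, here is one plausible way to compute it in Python. This is a sketch under our own assumptions rather than the exact scoring code: the report does not spell out the formula, so we read the percentages above as the share of questions answered incorrectly in both runs where the two answers differ. The question IDs and answers are invented for illustration.

```python
from collections import Counter


def classify(first: str, second: str, truth: str) -> str:
    """Label how an answer moved between two runs of the same question."""
    if first == truth:
        return "correct -> correct" if second == truth else "correct -> wrong"
    if second == truth:
        return "wrong -> correct"
    return "wrong -> same wrong" if first == second else "wrong -> different wrong"


def instability(run1: dict, run2: dict, truth: dict) -> float:
    """Share of persistently wrong answers that changed between runs (assumed definition)."""
    labels = [classify(run1[q], run2[q], truth[q]) for q in truth]
    changed = labels.count("wrong -> different wrong")
    persistent = changed + labels.count("wrong -> same wrong")
    return changed / persistent if persistent else 0.0


# Hypothetical data: five questions, two runs of the same model.
truth = {"q1": "10", "q2": "20", "q3": "30", "q4": "40", "q5": "50"}
run1 = {"q1": "10", "q2": "21", "q3": "33", "q4": "41", "q5": "55"}
run2 = {"q1": "11", "q2": "22", "q3": "33", "q4": "40", "q5": "56"}

transitions = Counter(classify(run1[q], run2[q], truth[q]) for q in truth)
score = instability(run1, run2, truth)

print(dict(transitions))
print(f"instability: {score:.0%}")
```

In this toy data set, three questions are wrong in both runs and two of them change, giving an instability of about 67%.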

Large language models are probabilistic systems. They do not calculate answers in the traditional sense. Instead, they predict the most likely next token based on patterns learned from data. As a result, their outputs are not strictly deterministic. In theory, if you were to test a model like Gemini hundreds of times on the same question, it is mathematically possible for it to produce an incorrect response every single time, even if it often gets the answer right. Each response is generated anew, influenced by probabilities rather than fixed rules.

A calculator works differently. Given the same input, it will always return the same output. There is no variation and no reinterpretation of the problem.
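The contrast can be sketched in a few lines of Python. This is a toy model, not how any of the tested chatbots is implemented: the candidate answers and their probabilities are invented, and a real LLM samples token by token from a far larger vocabulary. The point is only that a sampled answer can vary between runs, while a computed one cannot.

```python
import random
from collections import Counter

# Hypothetical answer distribution for the prompt "17 * 24 = ".
CANDIDATES = ["408", "418", "398"]
WEIGHTS = [0.80, 0.15, 0.05]


def calculator(a: int, b: int) -> int:
    """Deterministic: the same input always yields the same output."""
    return a * b


def sample_answer(rng: random.Random) -> str:
    """Probabilistic: draw one answer according to the weights above."""
    return rng.choices(CANDIDATES, weights=WEIGHTS, k=1)[0]


rng = random.Random(0)  # seeded only so the demo is repeatable
answers = Counter(sample_answer(rng) for _ in range(1000))

# Chance of 100 wrong answers in a row: tiny, but not zero.
p_all_wrong = (1 - WEIGHTS[0]) ** 100

print("calculator:", {calculator(17, 24) for _ in range(1000)})
print("sampled:   ", dict(answers))
print(f"P(100 wrong in a row) = {p_all_wrong:.3g}")
```

The calculator produces a single value across all 1,000 calls, while the sampler returns a mix of right and wrong answers even though the correct one is by far the most likely.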

So while AI can provide correct results, it is far less predictable than a calculator. Calculators give the same result every time, whereas AI answers can drift, sometimes correcting themselves, sometimes creating new mistakes. Users need to keep this in mind when relying on AI for quantitative tasks.

AI is improving, but not evenly. Some models are leaping ahead in certain domains, while others make steady but modest gains. The most advanced systems are cleaner and easier to read, yet simple calculations still challenge even the best models.

For anyone relying on AI, this means that these tools can assist and guide, but they are not perfect substitutes for careful calculation. The progress we see today is promising, but it also reminds us that AI remains a tool that needs human oversight.

Looking ahead, the uneven pace of improvement suggests that the next generation of models will be stronger in some areas and less so in others. Watching how these trends develop will be key to understanding what AI can truly do in everyday tasks.