The Lagrange error bound calculator will help you determine the Lagrange error bound, the largest possible error arising from using the Taylor series to approximate a function. And what's more, this article will show you:

- What the Lagrange error bound is;

- How it relates to the Taylor remainder theorem;

- The Lagrange error bound formula; and

- A worked-out example of how to calculate the Lagrange error bound.

🙋 Looking for other calculators on number series? Why not give our harmonic series calculator a try?

How to use the Lagrange error bound calculator

The Lagrange error bound calculator is easy to use! Simply enter your Taylor series problem's values into their corresponding fields:

- Enter the number of terms from the Taylor series (also known as the resulting Taylor polynomial's degree) into the field labelled n.

- Enter the $x$-value at which you're evaluating the error into the field labelled x.

- Enter the polynomial's center in the field labelled a.

- Enter the maximum absolute value of the $(n+1)$-th derivative of the function you're approximating into the field labelled M.

The Lagrange error bound will be presented at the bottom of the calculator.

Now, if you find yourself asking questions such as "What is the Taylor series?" or "What is the Lagrange error bound?", then read on!

What is the Lagrange error bound?

The Lagrange error bound is the upper bound on the error that results from approximating a function using the Taylor series. Using more terms from the series reduces the error, but it's rarely zero, and it's hard to calculate directly. The error bound tells us what the largest possible error is. The Lagrange error bound formula is derived from the Taylor remainder theorem.

💡 The Lagrange error bound can be seen as a margin of error for the Taylor series approximation. If you want to learn more about that topic, head over to our margin of error calculator.

A short introduction to the Taylor series

If we have some sufficiently well-behaved function $f(x)$, then we can perfectly replicate it using an infinite sum of terms, each expressed using a higher-order derivative of $f$. This endless sum is called the Taylor series.

Let's clarify the above with a mathematical definition: any such (analytic) function can be replicated, near the point $a$, by this infinitely long summation:

$$f(x) = \sum_{n=0}^{\infty} \frac{f^{(n)}(a)}{n!}\,(x-a)^n$$

where:

- $f^{(n)}$ is the $n$-th derivative of the function $f$; and

- $a$ is any point of our choosing, as long as $f$ is infinitely differentiable at $a$.

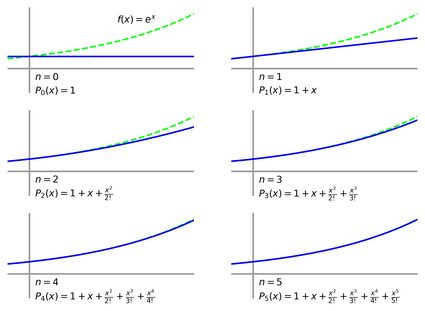

Below, you can see the Taylor series in action, as it approximates a function with more and more terms, i.e., as the value of $n$ becomes higher and higher:

Isn't that amazing? With only a 5th-degree polynomial, we can already approximate the function to a high degree of accuracy.

However, we now see a problem: the Taylor series' definition is only true if we use an infinite number of terms. If we stop anywhere short of infinity (and as mortals, we must), then we'd only have approximated $f(x)$ with the Taylor polynomial, $P_n(x)$:

$$P_n(x) = \sum_{k=0}^{n} \frac{f^{(k)}(a)}{k!}\,(x-a)^k$$

Because $P_n(x)$ is just an approximation of $f(x)$, there will be a remainder, $R_n(x)$:

$$R_n(x) = f(x) - P_n(x)$$

What is the value of $R_n(x)$? We can't know for sure because there are countless terms to calculate. We do know that the more terms we include from the series (i.e., the higher the polynomial's degree, $n$), the better we approximate $f(x)$ with $P_n(x)$, and the smaller that error will become.
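To make the shrinking remainder concrete, here is a minimal Python sketch. It assumes $f(x) = e^x$ centered at $a = 0$ purely as a stand-in (chosen because every derivative of $e^x$ is $e^x$); the function name `taylor_poly_exp` and the evaluation point are hypothetical, not something the calculator itself uses.

```python
import math


def taylor_poly_exp(x, n, a=0.0):
    """Degree-n Taylor polynomial of e^x centered at a, evaluated at x."""
    # Every derivative of e^x is e^x, so the k-th derivative at a is e^a.
    return sum(math.exp(a) * (x - a) ** k / math.factorial(k) for k in range(n + 1))


x = 1.0  # hypothetical evaluation point
# Actual remainder |f(x) - P_n(x)| for degrees n = 0..7.
remainders = [abs(math.exp(x) - taylor_poly_exp(x, n)) for n in range(8)]

for n, r in enumerate(remainders):
    print(f"n = {n}: |R_n(x)| = {r:.2e}")
```

Here the true remainder can be measured only because `math.exp` gives us the exact value to compare against; in general that value is what we are trying to approximate in the first place.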

Luckily for us, there is an upper bound on the magnitude of this remainder, a worst-case-scenario estimate of the error. It's called the Lagrange error bound, and this calculator and text are all about finding it.

💡 Errors aren't desirable, but measuring them is the first step to fixing them! Try our relative error calculator to get started.

How do I calculate the Lagrange error bound?

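The Lagrange error bound formula is as follows:

$$E_n(x) = \frac{M\,|x-a|^{n+1}}{(n+1)!}$$

where:

- $E_n(x)$: Lagrange error bound;

- $x$: Where we're determining the error between $f(x)$ and its Taylor polynomial $P_n(x)$;

- $a$: Where the polynomial is centered;

- $n$: Number of terms from the Taylor series we're including, and thus the degree of the resulting polynomial; and

- $M$: Largest value of $|f^{(n+1)}(z)|$ for all $z$ between $a$ and $x$:

$$M = \max_{z \text{ between } a \text{ and } x} \left|f^{(n+1)}(z)\right|$$

Using $E_n(x)$, we can place a bound on the remainder $R_n(x) = f(x) - P_n(x)$:

$$|R_n(x)| \le E_n(x)$$

which simply states that $R_n(x)$ cannot be larger in magnitude than $E_n(x)$, thereby providing an upper bound on any error that might result from the approximation of $f(x)$.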
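The formula translates almost directly into code. The following is a small Python sketch, not the calculator's actual implementation; the function name `lagrange_error_bound` and the sample inputs are hypothetical, and the four parameters mirror the calculator's fields M, x, a, and n.

```python
import math


def lagrange_error_bound(M, x, a, n):
    """Return M * |x - a|^(n+1) / (n+1)! for a degree-n Taylor polynomial."""
    if not isinstance(n, int) or n < 0:
        raise ValueError("n must be a non-negative integer")
    if M < 0:
        raise ValueError("M is a maximum of absolute values and cannot be negative")
    return M * abs(x - a) ** (n + 1) / math.factorial(n + 1)


# Hypothetical inputs: M = 1, x = 0.5, a = 0, n = 3 gives 0.5^4 / 4!.
bound = lagrange_error_bound(M=1.0, x=0.5, a=0.0, n=3)
print(f"Lagrange error bound: {bound:.6f}")
```

Note that the function takes $M$ as an input rather than computing it: finding the largest value of the $(n+1)$-th derivative depends on the function at hand and usually has to be reasoned out separately.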

That's enough formulae — let's look at an example of the Lagrange error bound and how it's calculated.

Calculating the Lagrange error bound: an example

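Let's find the error bound for the function $f(x) = \sin(x)$ at $x = \pi/3$ when approximated by a 4th-order Taylor polynomial centered around $a = \pi/6$.

Right off the bat, we have some values ready to be plugged into our Lagrange error bound formula:

- $f(x) = \sin(x)$;

- $x = \pi/3$;

- $a = \pi/6$; and

- $n = 4$.

The hardest part will be determining $M$, the largest value of the 5th derivative of $f$ within our interval $[\pi/6, \pi/3]$. Let's find it.

The higher-order derivatives of $\sin(x)$ are given below:

| Order | Derivative |
|---|---|
| 1 | $\cos(x)$ |
| 2 | $-\sin(x)$ |
| 3 | $-\cos(x)$ |
| 4 | $\sin(x)$ |
| 5 | $\cos(x)$ |

Great! $f^{(5)}(x) = \cos(x)$. Now we need to know what the biggest value of $|\cos(z)|$ can be at any point in the range $[\pi/6, \pi/3]$. We could use some fancy mathematics for this, but we can also reason that

- $\cos(\pi/6) = \sqrt{3}/2 \approx 0.866$;

- $\cos(\pi/3) = 0.5$; and

- $\cos(z)$ is strictly decreasing over the range $[\pi/6, \pi/3]$.

So, the largest value of $|\cos(z)|$ in our range of $[\pi/6, \pi/3]$ is attained at $z = a = \pi/6$, and therefore $M = \sqrt{3}/2 \approx 0.866$.

Now, we have everything we need to determine the Lagrange error bound:

$$\frac{M\,|x-a|^{n+1}}{(n+1)!} = \frac{0.866 \cdot (\pi/6)^{5}}{5!} \approx 0.000284$$

Thanks to this result, we know that our error cannot be greater than 0.000284. Let's see if that's true:

$$|R_4(\pi/3)| = |\sin(\pi/3) - P_4(\pi/3)| \approx 0.000268 \le 0.000284$$

The Lagrange error bound is correct! And what's more, if we took the same steps for other values of $n$, we would see how the error bound decreases as we approximate better.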
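As a numerical sanity check, here is a minimal Python sketch that compares the bound with the actual error for several degrees. It assumes the example is $f(x) = \sin(x)$ with $a = \pi/6$ and $x = \pi/3$ (values that reproduce the 0.000284 figure for $n = 4$); the helper names are hypothetical. It relies on the identity that the $k$-th derivative of $\sin(z)$ is $\sin(z + k\pi/2)$, and on the fact that every derivative of sine peaks in magnitude at $\sqrt{3}/2$ on this interval.

```python
import math


def sin_derivative(k, z):
    """k-th derivative of sin at z, using d^k/dz^k sin(z) = sin(z + k*pi/2)."""
    return math.sin(z + k * math.pi / 2)


def taylor_poly_sin(x, a, n):
    """Degree-n Taylor polynomial of sin centered at a, evaluated at x."""
    return sum(sin_derivative(k, a) * (x - a) ** k / math.factorial(k) for k in range(n + 1))


def lagrange_bound(M, x, a, n):
    return M * abs(x - a) ** (n + 1) / math.factorial(n + 1)


a, x = math.pi / 6, math.pi / 3  # assumed center and evaluation point
# On [pi/6, pi/3], both |sin| and |cos| are at most sqrt(3)/2,
# so the same M works for every derivative order.
M = math.sqrt(3) / 2

results = {}
for n in range(1, 7):
    bound = lagrange_bound(M, x, a, n)
    actual = abs(math.sin(x) - taylor_poly_sin(x, a, n))
    results[n] = (bound, actual)
    print(f"n = {n}: bound = {bound:.6f}, actual error = {actual:.6f}")
```

For each degree, the actual error should sit below the bound, and both should shrink as $n$ grows.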

And there you have it. We've shown you how to calculate the Lagrange error bound — now it's your turn!

FAQs

What is the Lagrange error bound for f(x) = 1/(1-x) at x=0.1 with a 2nd-order Maclaurin series?

The Lagrange error bound is approximately 0.0015. We can calculate it with the following steps:

- Find the values for a, x, and n: a = 0 (since we're using the Maclaurin series); x = 0.1; and n = 2.

- Find the largest value of the (n+1)th derivative of f anywhere between a and x. For us, this is approximately 9, because the third derivative, $6/(1-z)^4$, is largest at $z = 0.1$.

- Plug these values into the Lagrange error bound formula.
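The steps above can be sketched in Python as follows. This is an illustrative check rather than the calculator's own code; the variable and function names are hypothetical. The third derivative of $1/(1-x)$ is $6/(1-x)^4$, and the 2nd-order Maclaurin polynomial is $1 + x + x^2$.

```python
import math

a, x, n = 0.0, 0.1, 2  # Maclaurin series, so the center is 0


def third_derivative(z):
    """Third derivative of f(z) = 1 / (1 - z)."""
    return 6.0 / (1.0 - z) ** 4


# The third derivative increases on [0, 0.1], so its maximum is at z = x.
M = third_derivative(x)

bound = M * abs(x - a) ** (n + 1) / math.factorial(n + 1)

# Actual error of the 2nd-order Maclaurin polynomial 1 + x + x^2.
actual = abs(1.0 / (1.0 - x) - (1.0 + x + x**2))

print(f"M = {M:.4f}, bound = {bound:.6f}, actual error = {actual:.6f}")
```

The unrounded $M$ is slightly above 9, so the bound comes out marginally above 0.0015, and the actual error stays below it.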

What is the Taylor remainder?

The Taylor remainder is the difference between a function and its Taylor polynomial approximation. Because the Taylor series is infinitely long, we can only ever sum a finite number of its terms, so the result generally remains an approximation of the original function. However, we can put an upper limit on the magnitude of the Taylor remainder, which is called the Lagrange error bound.

What is M in the Lagrange error bound?

M represents the largest magnitude value that the (n+1)th derivative of the function f can take on between points a and x when you're approximating the value of f at x with an nth degree Taylor polynomial centered at a.